We’ve looked at using transactions in an SSIS package to ensure that, for instance, the read data step in your package doesn’t fail after you’ve deleted the data it’s set to replace.

This is a very effective and really useful method, and it’s exceedingly flexible.

If your project spans multiple servers, though, this will require changes to the DST service settings that you might not be able to make.

There’s a simpler way, though it doesn’t offer all of the protections of wrapping your package in a transaction. We’ll take a look at that, here.

First: the problem

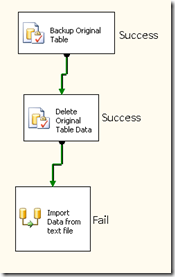

The stereotypical SSIS package looks like the one above: delete data in the destination database, then import new data into the target. The problem is also displayed above:What if you are successful in deleting the data, but then there’s a failure, either in reading the new data? This failure could be from a password change, permissions problems on the source, or, in the case of a flat file source, a nonexistent file.

In any of those scenarios, we’re faced with data that already has been deleted, but there’s nothing to replace it with.

Solutions

There are a couple of possible solutions to this problem, including using transactions or building in a failure step that would copy the data back from your backup. The first option, utilizing transactions, is certainly the most robust option. But it often requires some server-side settings that not every DBA or data analyst has access to change.The second option, building in some failure events in your job, is easy enough, but it can complicate what is an otherwise very straightforward process.

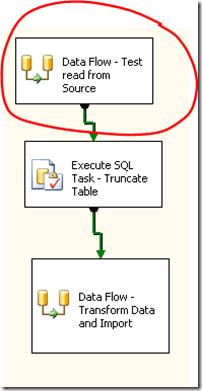

A third option is simply to test your read in a task that executes before your main task. This is the option we’ll use here, as it’s very simple, both conceptually and in implementation. Plus, it meets our basic requirements of providing a failure before data is deleted.

The details

Here’s a data flow task that we use daily. We read from the source, do some data transformations, and then write the data to a destination table. That table has already been truncated in an earlier task.

Because the truncate already has happened, if there’s an error at the source read step here, we’re in trouble.

So we create a new data flow task before our delete step. That data flow task consists of one thing only: the read from source step from the above task. Just copy and past.

We don’t actually do anything with this data, and, in fact, were it a really large data read, we could do something like retrieve the top 10,000 rows, just to be sure that all is well on our source.

As you can see, if that read step fails, now, the package fails, and no data has been lost.

While we’ve not provided bulletproof failure protection, we’ve eliminated the most likely failure scenario, and for a lot of folks, that’s a really big improvement.

• If the player and the player-dealer’s hand both comprise two aces of hearts, the arms push and no action is taken on the wager. If the vendor has the upper hand, the players lose their bets and the vendor wins that spherical. In our last section, you'll 우리카지노 be taught probably the most advanced technique for playing in} blackjack -- counting cards.

ReplyDelete